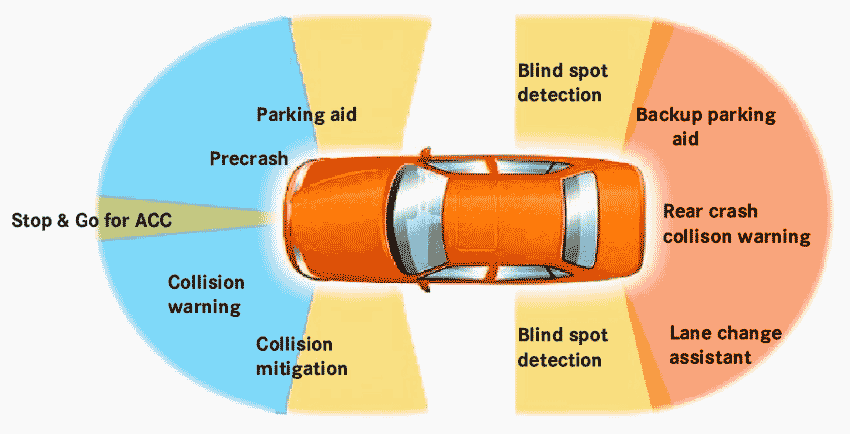

Each sensor used in an ADAS-equipped vehicle has strengths and weaknesses:

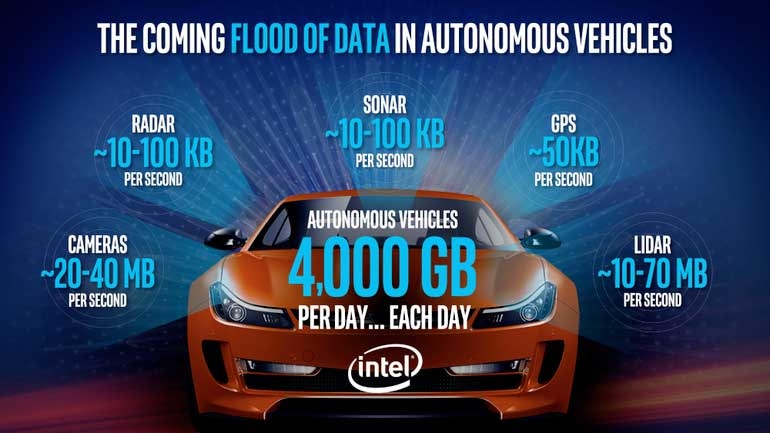

- LiDAR is excellent for 3D perception and works well in darkness, but it cannot see color. LiDAR can detect very small objects, but its performance degrades in smoke, dust, rain, and other atmospheric conditions. It requires less downstream processing than cameras, but is usually more expensive.

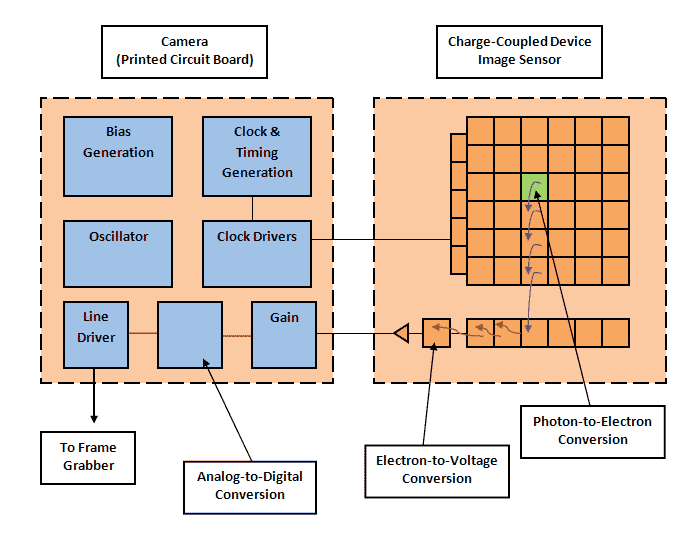

- Cameras can determine whether a traffic light is red, green, or amber. They are very good at “reading” signs and seeing lane markings and other road indicators. But they are less effective in darkness or when the atmosphere is dense with fog, rain, or snow. They also require more processing than LiDAR.

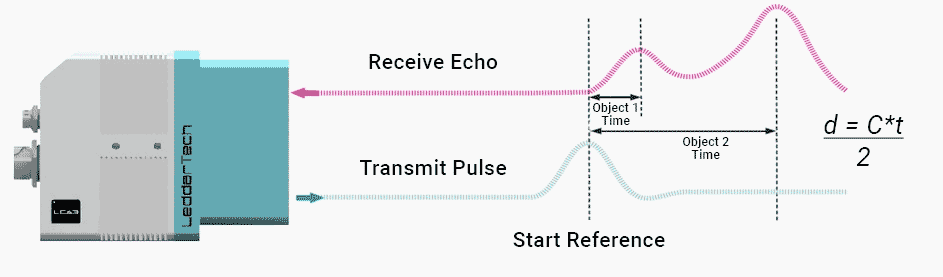

- RADAR can see farther down the road than other ranging sensors, which is essential for high-speed driving. It works well in darkness and in rain, dust, and fog. However, it cannot build models as precise as those produced by cameras or LiDAR, and it is less able than some other sensors to detect very small objects.

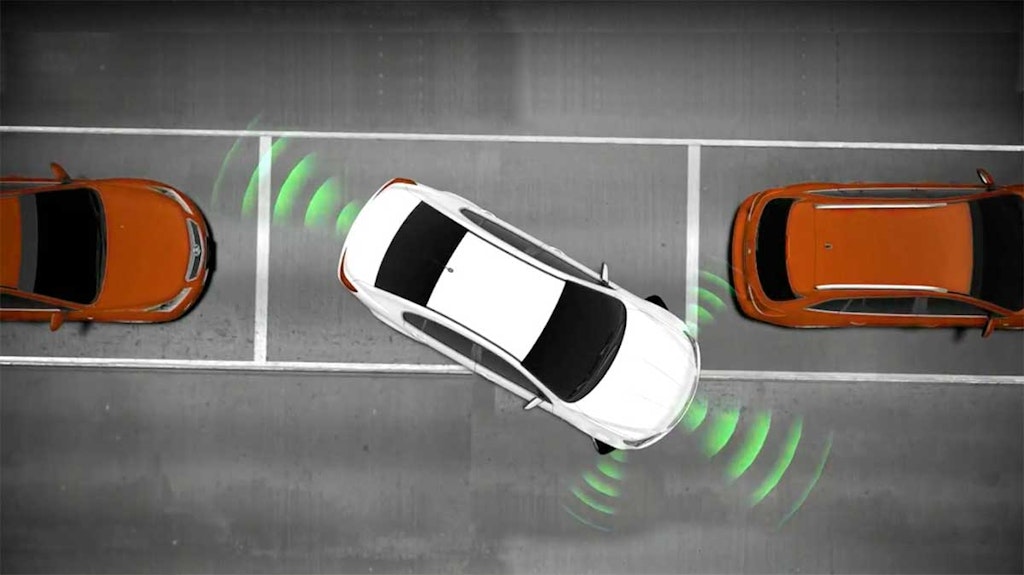

- SONAR is excellent for close-range distance measurement, such as during parking maneuvers, but it is not suitable for long-range measurement. Because it can be disturbed by wind noise, it is not effective at high vehicle speeds.

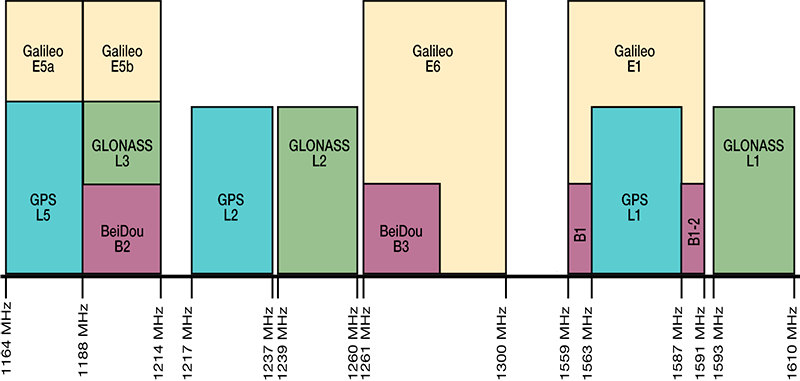

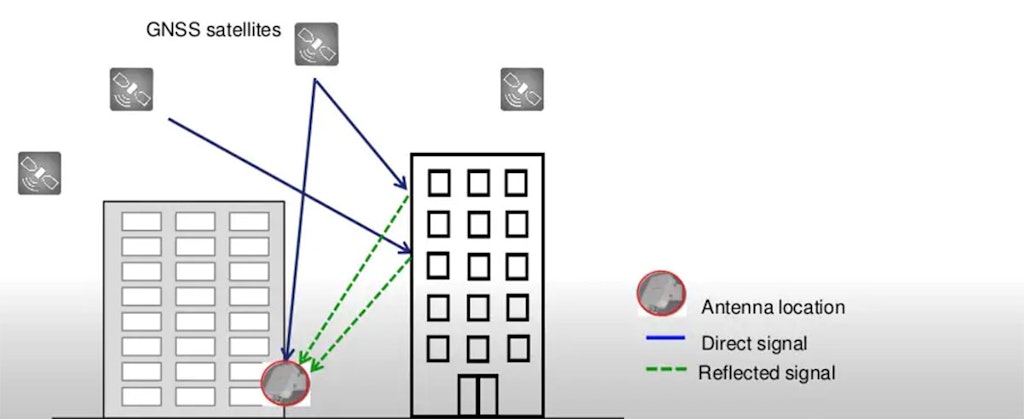

- GNSS, combined with frequently updated map databases, is essential for navigation. But raw GNSS, with position errors of one meter or more, is not accurate enough for fully autonomous driving, and without line of sight to the sky it cannot provide a position at all. For automated driving, it must be integrated with other sensors, including IMUs, and enhanced with RTK, SBAS, or GBAS corrections.

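IMU systems provide the dead reckoning that GNSS systems need when the line of sight to the sky is blocked, or when satellite signals are corrupted by multipath reflections in an "urban canyon." Dead reckoning estimates the vehicle's current position by integrating its measured acceleration and rotation forward from the last known position.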
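To make the idea of dead reckoning concrete, here is a minimal sketch in Python. It assumes a vehicle moving on flat ground, an IMU that reports forward acceleration and yaw rate at a fixed sample interval, and a last good GNSS fix as the starting pose. The names `Pose2D` and `dead_reckon` are hypothetical, and the sketch deliberately ignores sensor bias, tilt and gravity compensation, and noise, all of which a production system must handle.

```python
import math
from dataclasses import dataclass


@dataclass
class Pose2D:
    """Hypothetical planar vehicle state: position (m), heading (rad), forward speed (m/s)."""
    x: float
    y: float
    heading: float
    speed: float


def dead_reckon(start: Pose2D, samples, dt: float) -> Pose2D:
    """Propagate the last GNSS fix using IMU samples while GNSS is unavailable.

    Each sample is (forward_accel_mps2, yaw_rate_radps), taken every dt seconds.
    Uses simple Euler integration on flat ground; bias, tilt, and noise are ignored.
    """
    x, y, heading, speed = start.x, start.y, start.heading, start.speed
    for accel, yaw_rate in samples:
        heading += yaw_rate * dt              # gyro: integrate turn rate into heading
        speed += accel * dt                   # accelerometer: integrate into speed
        x += speed * math.cos(heading) * dt   # integrate velocity into position
        y += speed * math.sin(heading) * dt
    return Pose2D(x, y, heading, speed)
```

For example, `dead_reckon(Pose2D(0.0, 0.0, 0.0, 10.0), [(0.0, 0.0)] * 100, 0.01)` models one second of straight driving at 10 m/s and returns a pose about 10 m east of the starting fix. Because every small measurement error is integrated along with the signal, the estimate drifts over time, which is why dead reckoning bridges GNSS outages rather than replacing GNSS.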

These sensors complement each other and allow the central processor to create a three-dimensional model of the environment around the vehicle, so it can determine where to go and how to get there, follow driving rules, and respond to both expected and unexpected events on roads and in parking areas.
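One simple way to see how complementary sensors improve an estimate is inverse-variance weighting, the basic building block behind Kalman-style sensor fusion. The sketch below is an illustration under stated assumptions, not how any particular vehicle's central processor works: it assumes two or more sensors measure the same quantity (for example, the range to a vehicle ahead) with independent, unbiased errors of known variance. The function name `fuse_measurements` and the noise figures in the usage note are hypothetical.

```python
from typing import Iterable, Tuple


def fuse_measurements(measurements: Iterable[Tuple[float, float]]) -> Tuple[float, float]:
    """Inverse-variance weighted fusion of independent measurements of one quantity.

    Each measurement is (value, variance). Returns (fused_value, fused_variance).
    Assumes errors are unbiased and independent between sensors.
    """
    weight_sum = 0.0
    weighted_total = 0.0
    for value, variance in measurements:
        if variance <= 0:
            raise ValueError("variance must be positive")
        weight = 1.0 / variance        # more precise sensors get more weight
        weight_sum += weight
        weighted_total += weight * value
    if weight_sum == 0.0:
        raise ValueError("at least one measurement is required")
    # The fused variance is smaller than any single input variance.
    return weighted_total / weight_sum, 1.0 / weight_sum
```

With a hypothetical RADAR range of 50.4 m (variance 0.25 m²) and LiDAR range of 50.1 m (variance 0.01 m²), `fuse_measurements([(50.4, 0.25), (50.1, 0.01)])` lands close to the more precise LiDAR value, and its variance is lower than either sensor's alone. A real ADAS stack would also track objects over time and associate detections across sensors, typically with a Kalman filter or one of its variants.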

In short, we need all of them, or some combination of them, in order to achieve ADAS and ultimately autonomous driving.

Summary

When you were a child, did you ever imagine that your family car would one day be equipped with RADAR and SONAR like an aircraft or submarine? Did you imagine flat-panel displays dominating the dashboard and navigation systems connected to satellites in space? It sounds like science fiction, yet today all of that and more has become reality.

ADAS is one of the most important areas of automotive development today. Of course, the parallel development of hybrid and electric vehicles is also critical to reducing greenhouse gas emissions and fossil fuel use. But ADAS directly addresses the most important aspect of travel and mobility: human safety.

Because more than 90% of road accidents, injuries, and fatalities are caused by human error, every advance in ADAS has a clear and direct effect on preventing injury and death.